Discrete Probability Distributions

A probability distribution is a function or rule that describes how probability is spread across the possible values of a random variable.

A discrete probability distribution is a probability distribution for a discrete random variable, meaning the variable can take only a countable set of distinct values.

Probability Mass Function (PMF)

This is the mathematical tool or function used to define that distribution.

Definition:

A Probability Mass Function (PMF) is a function or rule that assigns a probability to each value a discrete random variable can take in its sample space.

Mathematically, it gives the probability that a discrete random variable ($X$) takes exactly the value $x$, written $P(X = x)$.

Properties:

- Non-negativity: $P(X = x) \ge 0$ for all possible values of $x$ in the state space.

- Normalization: The sum of the probabilities for all possible values must equal 1: $$\sum_{x} P(X = x) = 1$$

- Support: $P(X = x) > 0$ only for $x$ in the set of possible values (the support) of $X$.
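The non-negativity and normalization properties can be checked mechanically. The following is a minimal sketch, assuming a PMF is stored as a dictionary that maps each value to its probability; the helper name `is_valid_pmf` and the two sample dictionaries are illustrative, not part of the notes above.

```python
from fractions import Fraction


def is_valid_pmf(pmf):
    """Check non-negativity and normalization for a PMF given as {value: probability}."""
    non_negative = all(p >= 0 for p in pmf.values())
    # Exact fractions avoid floating-point rounding in the sum.
    normalized = sum(pmf.values()) == 1
    return non_negative and normalized


# Hypothetical examples: a fair coin, and a table whose probabilities sum to 5/6.
fair_coin = {"H": Fraction(1, 2), "T": Fraction(1, 2)}
broken = {"H": Fraction(1, 2), "T": Fraction(1, 3)}

print(is_valid_pmf(fair_coin))  # True
print(is_valid_pmf(broken))     # False
```

Using `Fraction` keeps the normalization test exact; with floats, a tolerance-based comparison would be the safer choice.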

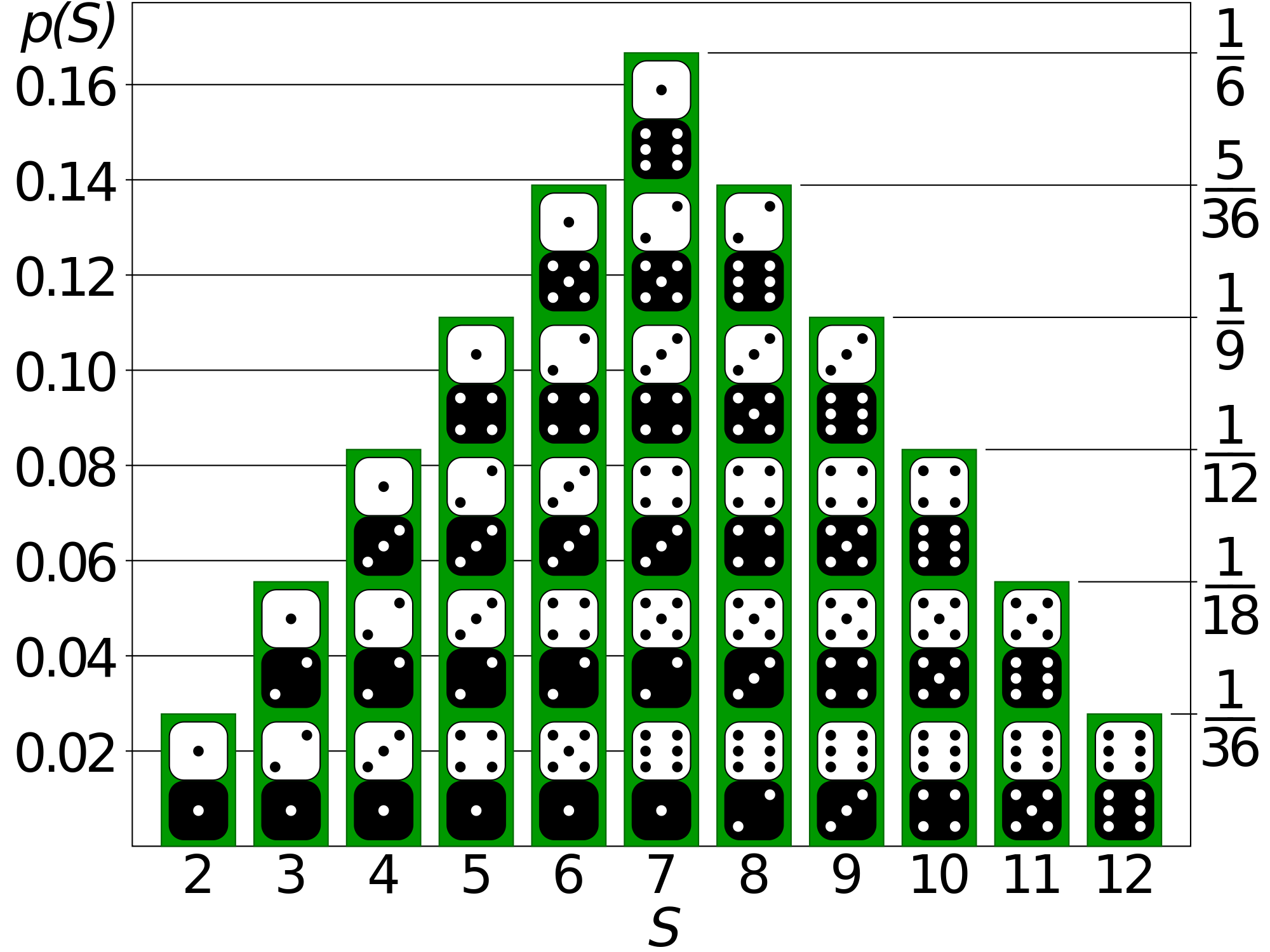

Example: Rolling Two Fair Dice

- Experiment: Roll two fair six-sided dice.

- Sample Space: $\{(i, j) : i, j \in \{1, 2, \dots, 6\}\}$

  - Number of outcomes: $6 \times 6 = 36$

- Random Variable ($X$): Sum of the two dice, $X = i + j$

  - Possible values: $2, 3, \dots, 12$

- Let $N(x)$ = Number of pairs $(i, j)$ such that $i + j = x$

  - e.g., $N(6) = 5$ (5 pairs: (1,5), (2,4), (3,3), (4,2), (5,1))

- PMF: $P(X = x) = \dfrac{N(x)}{36}$

  - e.g., $P(X = 6) = \dfrac{5}{36}$
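The PMF for the two-dice example can also be obtained by brute-force enumeration. This is a minimal sketch: it lists all 36 equally likely pairs, counts how many pairs produce each sum, and divides by 36. The variable names are illustrative.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# All ordered pairs (i, j) with i, j in 1..6.
outcomes = list(product(range(1, 7), repeat=2))

# Number of pairs that produce each sum.
counts = Counter(i + j for i, j in outcomes)

# PMF: count of favorable pairs divided by the total number of outcomes.
pmf = {x: Fraction(n, len(outcomes)) for x, n in sorted(counts.items())}

for x, p in pmf.items():
    print(x, p)
```

Note that `Fraction` prints values in lowest terms, so the entry for a sum of 4 appears as 1/12 rather than 3/36.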

★ Common Discrete Distributions

1. The Bernoulli Distribution

The Bernoulli distribution is the simplest of all. It models an experiment with only two possible outcomes, which we typically label as "success" and "failure."

- The Experiment: A single trial.

- The Outcomes:

- Success (e.g., getting heads, finding a defective item, a customer clicking an ad).

- Failure (e.g., getting tails, finding a good item, a customer not clicking).

- The Random Variable ($X$):

  - $X = 1$ for a success.

  - $X = 0$ for a failure.

- The Parameter:

  - $p$: the probability of success.

- The PMF: $$P(X = x) = p^x (1-p)^{1-x}, \quad x \in \{0, 1\}$$

Example:

Probability of getting a job offer. Let's say the probability of "yes" is $p$. Then $P(X = 1) = p$ and $P(X = 0) = 1 - p$.
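As a quick illustration of the Bernoulli PMF, here is a minimal sketch. The function name `bernoulli_pmf` and the value `p_offer = 0.25` are assumptions made only for this example; they are not taken from the job-offer example above.

```python
def bernoulli_pmf(x, p):
    """P(X = x) for a Bernoulli random variable with success probability p."""
    if not 0 <= p <= 1:
        raise ValueError("p must lie in [0, 1]")
    if x not in (0, 1):
        return 0.0  # values outside the support have probability zero
    return p**x * (1 - p) ** (1 - x)


p_offer = 0.25  # hypothetical probability of a "yes"

print(bernoulli_pmf(1, p_offer))  # 0.25
print(bernoulli_pmf(0, p_offer))  # 0.75
```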

2. The Binomial Distribution

What happens if we repeat a Bernoulli trial multiple times? That's where the Binomial distribution comes in. It models the number of successes in a fixed number of independent Bernoulli trials.

- The Experiment: Performing $n$ independent Bernoulli trials.

- The Random Variable ($X$): The total number of successes in the $n$ trials.

- The Parameters:

  - $n$: the total number of trials.

  - $p$: the probability of success on any single trial.

- The PMF: The probability of getting exactly $k$ successes in $n$ trials is: $$P(X=k) = \binom{n}{k} p^k (1-p)^{n-k}$$

Where $\binom{n}{k} = \dfrac{n!}{k!\,(n-k)!}$ is the combination "n choose k," which counts the number of ways to arrange the $k$ successes among the $n$ trials.

Example:

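You flip a fair coin ($p = 0.5$) 5 times ($n = 5$). What is the probability of getting exactly 3 heads ($k = 3$)?

$$P(X=3) = \binom{5}{3} (0.5)^3 (0.5)^{2} = 10 \times 0.125 \times 0.25 = 0.3125$$

So, there is a 31.25% chance of getting exactly 3 heads in 5 flips.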
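The coin-flip figure can be reproduced with a few lines of Python. This is a minimal sketch using `math.comb` from the standard library (Python 3.8 or later); the function name `binomial_pmf` is illustrative.

```python
from math import comb


def binomial_pmf(k, n, p):
    """P(X = k): probability of exactly k successes in n independent trials."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


# Exactly 3 heads in 5 flips of a fair coin.
print(binomial_pmf(3, 5, 0.5))  # 0.3125

# The probabilities over k = 0..n should sum to 1.
print(sum(binomial_pmf(k, 5, 0.5) for k in range(6)))
```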

3. The Poisson Distribution

The Poisson distribution models the number of times an event occurs over a fixed interval of time or space, when we know the average rate at which the event occurs.

- The Experiment: Counting the number of occurrences of an event in a fixed interval.

- The Random Variable ($X$): The number of events in that interval.

- The Parameter:

  - $\lambda$ (lambda): the average number of events per interval.

- The PMF: The probability of observing exactly $k$ events is: $$P(X=k) = \frac{\lambda^k e^{-\lambda}}{k!}$$

Where $e$ is the base of the natural logarithm ($e \approx 2.71828$).

Example:

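A call center receives an average of 10 calls per hour ($\lambda = 10$). What is the probability of receiving exactly 7 calls ($k = 7$) in a given hour?

$$P(X=7) = \frac{10^7 e^{-10}}{7!} \approx 0.0901$$

So, there is about a 9% chance of receiving exactly 7 calls in that hour.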
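The call-center figure can be checked numerically. This is a minimal sketch using only the standard library; the function name `poisson_pmf` and the parameter name `lam` (used because `lambda` is a reserved word in Python) are illustrative.

```python
from math import exp, factorial


def poisson_pmf(k, lam):
    """P(X = k): probability of exactly k events when the average rate is lam."""
    return lam**k * exp(-lam) / factorial(k)


# Exactly 7 calls in an hour when the average is 10 calls per hour.
print(round(poisson_pmf(7, 10), 4))  # 0.0901
```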

4. The Discrete Uniform Distribution

The discrete uniform distribution is a probability distribution in which every outcome in a finite set is equally likely.

It is characterized by a constant probability mass function (PMF) over a finite range of values: if there are $n$ possible values, each one has probability $\dfrac{1}{n}$.

Example: Rolling a Fair Die

- When rolling a fair six-sided die, each face (1, 2, 3, 4, 5, 6) has an equal probability of $\dfrac{1}{6}$ of landing face up.
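A constant PMF like this is simple to build in code. The following is a minimal sketch, assuming the possible values are distinct; the function name `discrete_uniform_pmf` is illustrative.

```python
from fractions import Fraction


def discrete_uniform_pmf(values):
    """Assign probability 1/n to each of n distinct values."""
    values = list(values)
    return {v: Fraction(1, len(values)) for v in values}


die = discrete_uniform_pmf(range(1, 7))

print(die[3])             # 1/6
print(sum(die.values()))  # 1
```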