Derivatives for Machine Learning

1. Why Do We Need Derivatives in Machine Learning?

In machine learning, our primary goal is to optimize a model by minimizing a loss function. This loss function measures how "wrong" our model's predictions are.

The Core Idea: Derivatives tell us the slope of the loss function. By knowing the slope, we can determine the direction to adjust our model's parameters (weights and biases) to reduce the loss. This iterative process is called Gradient Descent.
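The loop described above can be sketched in a few lines of Python. This is a minimal illustration, assuming a hypothetical one-parameter loss `(w - 3)^2`; the names `loss`, `loss_derivative`, and `gradient_descent`, the learning rate, and the step count are all chosen for this example and are not from the original notes.

```python
def loss(w):
    # Hypothetical one-parameter loss with its minimum at w = 3.
    return (w - 3.0) ** 2


def loss_derivative(w):
    # Derivative of (w - 3)^2 with respect to w.
    return 2.0 * (w - 3.0)


def gradient_descent(w_start, learning_rate=0.1, steps=50):
    w = w_start
    history = [w]
    for _ in range(steps):
        slope = loss_derivative(w)
        # Step against the slope; a steeper slope produces a larger step.
        w = w - learning_rate * slope
        history.append(w)
    return w, history


w_final, history = gradient_descent(w_start=0.0)
print(f"final w = {w_final:.4f}, loss = {loss(w_final):.8f}")
print(f"first step size = {abs(history[1] - history[0]):.4f}")
print(f"last step size  = {abs(history[-1] - history[-2]):.8f}")
```

Because the step is proportional to the slope, early steps (far from the minimum, steep slope) are large and later steps shrink automatically as the slope flattens.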

flowchart TD

A[Model makes a prediction] --> B{Calculate Loss};

B --> C[Calculate Derivative of Loss];

C --> D{Is slope steep?};

D -- Yes --> E[Take a big step 'downhill'];

D -- No --> F[Take a small step 'downhill'];

E --> G[Update Model Parameters];

F --> G;

G --> A;

subgraph Optimization Loop

A

B

C

D

E

F

G

end

2. What is a Derivative?

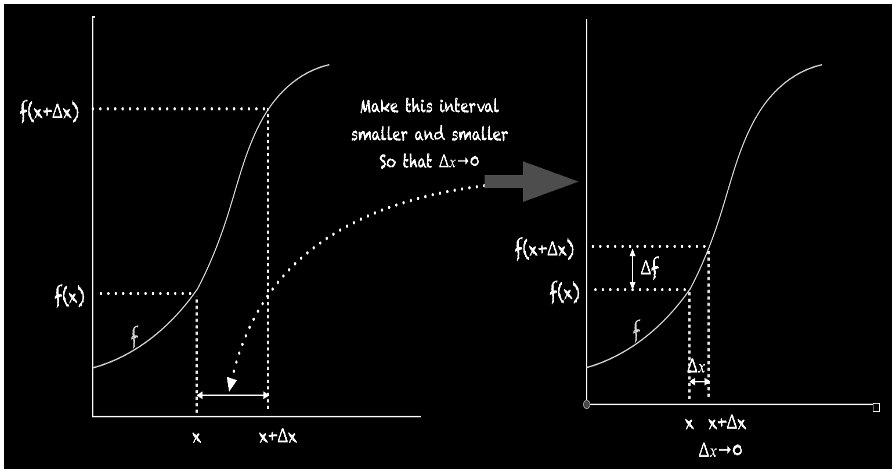

A derivative measures the instantaneous rate of change of a function with respect to one of its variables — it is the slope of the tangent line at a specific point.

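- Notation: $f'(x)$ or $\frac{dy}{dx}$

- Limit Definition: $f'(x) = \lim_{h \to 0} \frac{f(x + h) - f(x)}{h}$

As the gap $h$ shrinks to zero, the secant line through $(x, f(x))$ and $(x + h, f(x + h))$ approaches the tangent line, so the derivative at a point is the slope of the tangent line at that point.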
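The limit definition can be checked numerically by shrinking the gap and watching the difference quotient settle. The sketch below assumes the example function `x^2` at the point `x0 = 3`, where the exact derivative is `2 * x0 = 6`; the helper name `difference_quotient` is made up for this illustration.

```python
def difference_quotient(f, x, h):
    # Slope of the secant line through (x, f(x)) and (x + h, f(x + h)).
    return (f(x + h) - f(x)) / h


def square(x):
    return x * x


x0 = 3.0
exact = 2.0 * x0  # derivative of x^2 is 2x

for h in (1.0, 0.1, 0.01, 0.001):
    approx = difference_quotient(square, x0, h)
    print(f"h = {h:<6} quotient = {approx:.6f}  error = {abs(approx - exact):.6f}")
```

The error shrinks along with `h`, which is the numerical counterpart of the secant line turning into the tangent line.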

3. Differentiability: When Can We Find a Derivative?

A function $f$ is differentiable at a point if its derivative exists at that point. Differentiability fails in the following cases:

| Condition | Why it fails |
|---|---|
| Discontinuity | The function has a jump or hole, so no single tangent exists. |
| Sharp Corner / Cusp | Slope changes abruptly: left-hand and right-hand slopes differ. |
| Vertical Tangent | The tangent line is vertical, so the slope is infinite (undefined). |

ML Note: This is why activation functions like ReLU (which has a corner at 0) require special handling. Sub-gradients are used in practice.
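A minimal sketch of how the ReLU corner can be handled, assuming plain Python scalars. At `x = 0` any value between 0 and 1 is a valid sub-gradient; the parameter `value_at_zero` and its default of 0 are a convention assumed for this example, not something stated in these notes.

```python
def relu(x):
    return max(0.0, x)


def relu_subgradient(x, value_at_zero=0.0):
    # Away from 0 the ordinary derivative exists: 1 on the right, 0 on the left.
    if x > 0:
        return 1.0
    if x < 0:
        return 0.0
    # At the corner there is no single slope; any value in [0, 1] is a
    # valid sub-gradient, and value_at_zero picks one (assumed convention).
    return value_at_zero


for x in (-2.0, 0.0, 2.0):
    print(f"x = {x:+.1f}  relu = {relu(x):.1f}  subgradient = {relu_subgradient(x):.1f}")
```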

4. First and Second-Order Derivatives

4.1 First-Order Derivative — Slope & Direction

The first derivative $f'(x)$ is the slope of the tangent to the function at a point, indicating how rapidly the function is changing there.

| Condition | Meaning |
|---|---|
| $f'(x) > 0$ | Function is increasing |
| $f'(x) < 0$ | Function is decreasing |
| $f'(x) = 0$ | Critical point, indicating a possible local maximum, local minimum, or saddle point |

ML Context: Gradient descent moves in the opposite direction of $f'(x)$ to descend toward a minimum.

4.2 Second-Order Derivative — Curvature & Concavity

The second derivative $f''(x)$ measures how the slope itself is changing, i.e. the curvature of the function.

| Condition | Meaning | Shape |
|---|---|---|
| $f''(x) > 0$ | Concave Up (convex) | Bowl |
| $f''(x) < 0$ | Concave Down (concave) | Hill |
| $f''(x) = 0$ | Concavity may be changing | Possible inflection point |

ML Context: Second-order methods (e.g., Newton's method) use $f''(x)$ to take smarter steps but are expensive to compute at scale.
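To make the idea of a curvature-aware step concrete, here is a one-dimensional sketch of Newton's method for minimization, which divides the slope by the second derivative. The function `x^2 + e^x` is a hypothetical convex example chosen for this illustration, and `newton_minimize`, `tol`, and `max_iter` are names invented here.

```python
import math


def f_prime(x):
    # First derivative of the assumed example f(x) = x^2 + e^x.
    return 2.0 * x + math.exp(x)


def f_double_prime(x):
    # Second derivative; always positive, so f is convex.
    return 2.0 + math.exp(x)


def newton_minimize(x_start, tol=1e-10, max_iter=50):
    x = x_start
    for iteration in range(max_iter):
        slope = f_prime(x)
        if abs(slope) < tol:
            return x, iteration
        # Newton step: scale the slope by the local curvature.
        x = x - slope / f_double_prime(x)
    return x, max_iter


x_min, iterations = newton_minimize(x_start=2.0)
print(f"minimum near x = {x_min:.5f} after {iterations} Newton steps")
```

In one dimension the second derivative is a single number; with many parameters it becomes a full matrix of second derivatives, which is where the cost at scale comes from.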

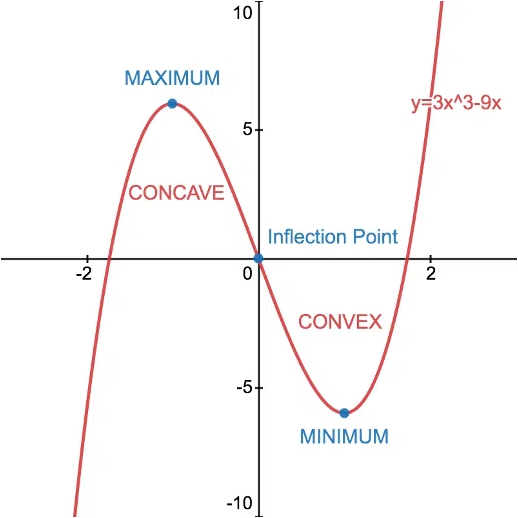

4.3 Concave vs. Convex Functions

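Convex Function (bowl-shaped)

- The graph lies below or on the chord joining any two points on the curve.

- $f''(x) \geq 0$ everywhere (where the second derivative exists).

- Any local minimum is also a global minimum, which makes convex functions ideal for optimization.

Concave Function (hill-shaped)

- The graph lies above or on the chord joining any two points on the curve.

- $f''(x) \leq 0$ everywhere (where the second derivative exists).

- Any local maximum is also a global maximum.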

ML Context: When the loss function is convex (e.g., MSE with linear models, logistic loss with linear models), every local minimum is a global minimum, so gradient descent with a suitable learning rate converges toward a global minimum. Deep neural network loss surfaces are generally non-convex, making optimization harder.
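The chord condition for convexity can be spot-checked numerically. The sketch below samples points between two endpoints and tests whether the graph stays on or below the chord; the helper name `lies_below_chord` and the sample functions are assumptions for this illustration. A passing check on one chord is only evidence, not a proof of convexity.

```python
def lies_below_chord(f, a, b, samples=101, tol=1e-12):
    # Compare f at interpolated points against the straight line (chord)
    # joining (a, f(a)) and (b, f(b)).
    for i in range(samples):
        t = i / (samples - 1)
        x = (1 - t) * a + t * b
        chord = (1 - t) * f(a) + t * f(b)
        if f(x) > chord + tol:
            return False
    return True


def bowl(x):
    return x * x       # convex


def hill(x):
    return -x * x      # concave


def cubic(x):
    return x ** 3      # neither convex nor concave on [-2, 2]


print("x^2  below chord on [-2, 3]:", lies_below_chord(bowl, -2.0, 3.0))
print("-x^2 below chord on [-2, 3]:", lies_below_chord(hill, -2.0, 3.0))
print("x^3  below chord on [-2, 2]:", lies_below_chord(cubic, -2.0, 2.0))
```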

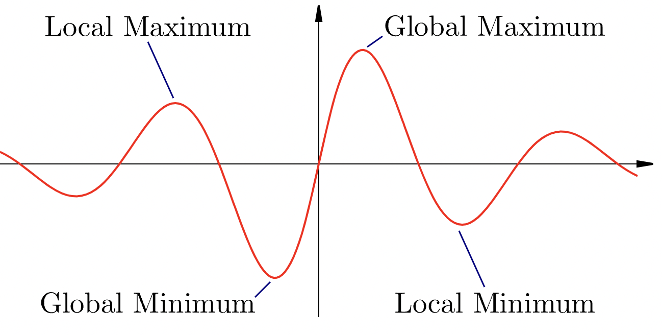

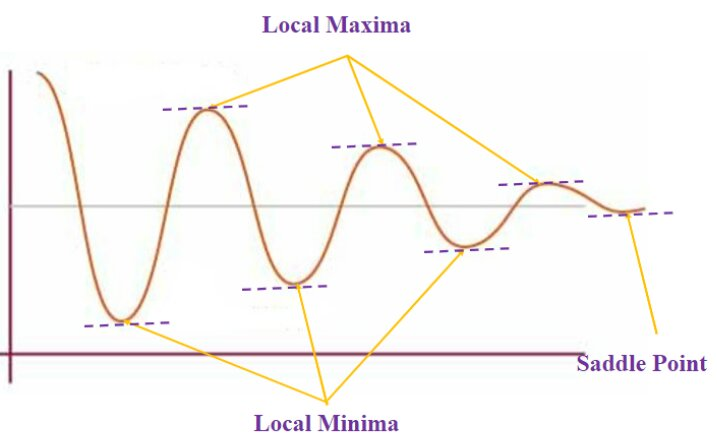

5. Critical Points: Maxima, Minima, and Saddle Points

The goal of optimization is to find the minimum of the loss function. Critical points (where $f'(x) = 0$) are the candidates for these minima.

| Type | Description | $f'(x)$ | $f''(x)$ |
|---|---|---|---|
| Local Minimum | Lowest point in a neighborhood | $= 0$ | $> 0$ |
| Local Maximum | Highest point in a neighborhood | $= 0$ | $< 0$ |
| Global Minimum | Absolute lowest over entire domain | $= 0$ (interior point) | $\geq 0$ |
| Global Maximum | Absolute highest over entire domain | $= 0$ (interior point) | $\leq 0$ |
| Saddle Point | Critical point that is neither min nor max | $= 0$ | $= 0$ (test inconclusive) |
| Inflection Point | Concavity changes sign | (none required) | $= 0$ and changes sign |

ML Challenge: Gradient descent can get "stuck" in a local minimum or slow down near saddle points — both common in high-dimensional loss surfaces. Techniques like momentum, Adam, and learning rate schedules help escape these.
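A small experiment shows how plain gradient descent can settle in different minima depending on where it starts. The non-convex function `x^4 - 3x^2 + x` is a hypothetical example chosen for this sketch (it has a shallow local minimum near `x ≈ 1.13` and a deeper one near `x ≈ -1.30`); the learning rate and step count are also assumptions.

```python
def f(x):
    # Assumed non-convex example with two minima of different depth.
    return x ** 4 - 3 * x ** 2 + x


def f_prime(x):
    return 4 * x ** 3 - 6 * x + 1


def descend(x_start, learning_rate=0.01, steps=2000):
    x = x_start
    for _ in range(steps):
        x -= learning_rate * f_prime(x)
    return x


x_from_right = descend(2.0)   # starts on the right-hand slope
x_from_left = descend(-2.0)   # starts on the left-hand slope

print(f"start  2.0 -> x = {x_from_right:.4f}, f = {f(x_from_right):.4f}")
print(f"start -2.0 -> x = {x_from_left:.4f}, f = {f(x_from_left):.4f}")
```

The run that starts on the right stops in the shallower basin even though a lower value exists elsewhere, which is the "stuck in a local minimum" behaviour described above.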

6. Worked Example

Step 1 — Find the first and second derivatives

Step 2 — Find critical points (set

Step 3 — Classify critical points using

| Critical Point | Classification | |

|---|---|---|

| Local Maximum | ||

| Local Minimum |

Step 4 — Increasing / Decreasing intervals

Using the sign of

| Interval | Behaviour | |

|---|---|---|

| Decreasing | ||

| Decreasing | ||

| Increasing |

Step 5 — Concavity and inflection point

Set

| Interval | Concavity | |

|---|---|---|

| Concave Down | ||

| Concave Up |

Inflection point at
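The five steps can be sketched in code for any cubic `a*x^3 + b*x^2 + c*x + d`. The example call uses `f(x) = x^3 - 6x^2 + 9x`, borrowed from the question section of these notes; that choice is an assumption and may not be the function this worked example was originally written for. The helper name `analyze_cubic` is also invented here.

```python
import math


def analyze_cubic(a, b, c, d):
    """Critical points and inflection point of a*x^3 + b*x^2 + c*x + d (a != 0)."""
    # The constant d shifts the graph vertically and does not affect derivatives.
    # Step 1: f'(x) = 3a x^2 + 2b x + c and f''(x) = 6a x + 2b.
    def second_derivative(x):
        return 6 * a * x + 2 * b

    # Step 2: solve f'(x) = 0 with the quadratic formula.
    discriminant = (2 * b) ** 2 - 4 * (3 * a) * c
    critical_points = []
    if discriminant > 0:
        root = math.sqrt(discriminant)
        candidates = sorted([(-2 * b - root) / (6 * a), (-2 * b + root) / (6 * a)])
        # Step 3: classify each critical point by the sign of f''.
        for x in candidates:
            kind = "local minimum" if second_derivative(x) > 0 else "local maximum"
            critical_points.append((x, kind))
    # A zero or negative discriminant means no max/min pair; that case is skipped here.

    # Step 5: f''(x) = 0 gives the inflection point.
    inflection = -b / (3 * a)
    return critical_points, inflection


critical_points, inflection = analyze_cubic(1, -6, 9, 0)
for x, kind in critical_points:
    print(f"x = {x}: {kind}")
print(f"inflection point at x = {inflection}")
```

Step 4 (increasing and decreasing intervals) follows from the same information: the sign of the first derivative can only change at the critical points found in Step 2.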

7. Summary: Why Derivatives are Essential for ML

| Concept | Role in ML |
|---|---|
| First Derivative | Gives direction and magnitude of slope; drives gradient descent |
| Gradient | Vector of partial derivatives; tells us how to update all weights |
| Second Derivative | Reveals curvature; used in advanced optimizers (Newton's method) |
| Convexity | On a convex loss surface, any local minimum is a global minimum |
| Chain Rule | Powers backpropagation; makes deep network training feasible |
| Critical Points | Identify minima, maxima, and saddle points in the loss surface |

8. Questions and Answers

- A data scientist is analyzing a function f(x) and wants to determine whether it is differentiable at a certain point. Which of the following conditions must be met for a function to be differentiable at a point?

- The function must be continuous at the point.

- The function must have a defined slope at the point.

- The limit of the difference quotient as x approaches the point must exist.

Explanation

- Differentiability of a function at a point means that the function has a well-defined slope at that point. The slope is given by the derivative of the function at that point.

- To determine whether a function is differentiable at a certain point, we check the following conditions:

- The function must be continuous at the point. This means that the value of the function at the point is defined, and the limit of the function as x approaches the point exists and equals the value of the function at the point.

- The function must have a defined slope at the point. This means that the limit of the difference quotient as x approaches the point must exist. The difference quotient is (f(x) - f(a))/(x - a), where a is the point of interest.

- If both conditions are met, the function is differentiable at the point. Continuity alone is not sufficient: f(x) = |x| is continuous at x = 0 but has no defined slope there.
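The difference-quotient condition can be probed numerically by comparing the slope from the left with the slope from the right. This is a rough heuristic sketch, not a proof of differentiability; the helper names, the step `h`, and the tolerance `tol` are assumptions made for this example.

```python
def one_sided_slopes(f, a, h=1e-6):
    # Difference quotient (f(x) - f(a)) / (x - a) with x just right and just left of a.
    right = (f(a + h) - f(a)) / h
    left = (f(a - h) - f(a)) / (-h)
    return left, right


def looks_differentiable(f, a, h=1e-6, tol=1e-3):
    # Heuristic: the two one-sided slopes should agree if the limit exists.
    left, right = one_sided_slopes(f, a, h)
    return abs(left - right) < tol


def parabola(x):
    return x * x


print("x^2 at 0:", one_sided_slopes(parabola, 0.0), looks_differentiable(parabola, 0.0))
print("|x| at 0:", one_sided_slopes(abs, 0.0), looks_differentiable(abs, 0.0))
```

For `|x|` the left slope is -1 and the right slope is +1, so the two-sided limit of the difference quotient does not exist even though the function is continuous at 0.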

- Consider the function f(x) = x^3 - 6x^2 + 9x. Which of the following statements is true?

The function has a local minimum at x = 3.

Explanation

- To find the minimum of the function, we take the derivative f'(x) = 3x^2 - 12x + 9 and set it to zero. Solving for x, we get x = 1 and x = 3.

- We then evaluate the second derivative f''(x) = 6x - 12 at each of these points to determine whether it is a minimum or a maximum (a value of zero would make the test inconclusive).

- At x = 1, f''(1) = -6 < 0, which means that it is a local maximum.

- At x = 3, f''(3) = 6 > 0, which means that it is a local minimum.

- Given a function g(x) = x^3 - x^2 - 5x + 2, what are the critical point(s) of this function?

x = -1 and x = 5/3

Explanation

To find critical points, we calculate the derivative g'(x) and solve for x such that g'(x) = 0. In this case, the derivative is g'(x) = 3x^2 - 2x - 5 = (3x - 5)(x + 1). Setting this equal to zero yields x = -1 and x = 5/3 as the critical points.
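As a quick numerical check, the quadratic `g'(x) = 3x^2 - 2x - 5` can be solved with the quadratic formula; it has two real roots. The variable names below are chosen for this sketch, and the classification uses `g''(x) = 6x - 2`, which follows from differentiating `g'(x)` once more.

```python
import math


def g_prime(x):
    return 3 * x ** 2 - 2 * x - 5


def g_double_prime(x):
    return 6 * x - 2


# Coefficients of g'(x) = a*x^2 + b*x + c.
a, b, c = 3.0, -2.0, -5.0
discriminant = b * b - 4 * a * c
roots = sorted([
    (-b - math.sqrt(discriminant)) / (2 * a),
    (-b + math.sqrt(discriminant)) / (2 * a),
])

for r in roots:
    kind = "local minimum" if g_double_prime(r) > 0 else "local maximum"
    print(f"critical point x = {r:.4f}, g'(x) = {g_prime(r):.1e}, {kind}")
```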